Deploy A Serverless ML Inference Using FastAPI, AWS Lambda And API

Hello, guys, I am Spidy, and I am back with another video. This tutorial guides you through the process of building a serverless inference...

Deploy and scale ML models without configuring or managing any of the underlying infrastructure with Amazon SageMaker Serverless Inference.

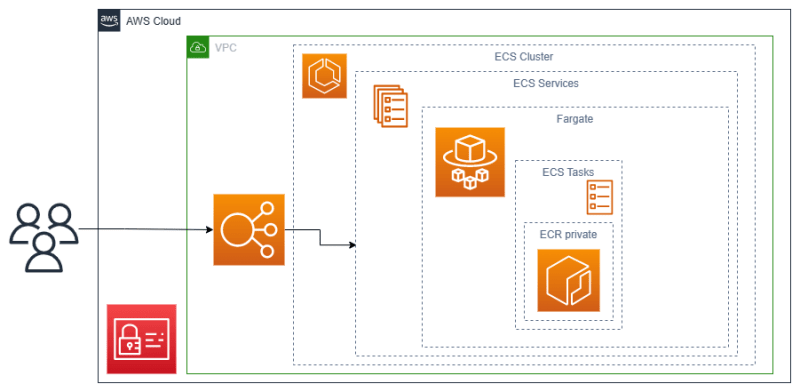

Module 4: Build an HTTP API using Amazon API Gateway and AWS Lambda. After deploying the model to a fully managed Amazon SageMaker inference endpoint, you are ready to build an HTTP API to allow web client applications to perform inferences.

Serverless components such as API Gateway and Lambda help invoke the endpoint and expose the model over an API. The steps of this tutorial are fairly straightforward.

For this notebook, we’ll be working with the SageMaker XGBoost algorithm to train a model and then deploy a serverless endpoint. We will be using the public S3 Abalone regression dataset for this example.

In this post, we will go a step further and automate the deployment of such a serverless inference service using Amazon SageMaker Pipelines. With SageMaker Pipelines, you can accelerate the delivery of end-to-end ML projects.
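To make the "deploy a serverless endpoint" step more concrete, here is a minimal sketch of how the request for a serverless endpoint configuration could be assembled with boto3's SageMaker client. It assumes a SageMaker model has already been registered (for example, from the trained XGBoost artifact); the names `abalone-xgb-serverless` and `abalone-xgb-model`, the helper functions, and the default memory and concurrency values are illustrative assumptions, not taken from the original notebook.

```python
# Serverless Inference accepts memory sizes in 1 GB steps from 1024 to 6144 MB.
ALLOWED_MEMORY_MB = (1024, 2048, 3072, 4096, 5120, 6144)


def build_serverless_endpoint_config(config_name, model_name,
                                     memory_mb=2048, max_concurrency=5):
    """Return keyword arguments for the SageMaker create_endpoint_config call.

    A serverless variant carries a ServerlessConfig block instead of an
    instance type and instance count.
    """
    if memory_mb not in ALLOWED_MEMORY_MB:
        raise ValueError(f"memory_mb must be one of {ALLOWED_MEMORY_MB}")
    if max_concurrency < 1:
        raise ValueError("max_concurrency must be at least 1")
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                "ServerlessConfig": {
                    "MemorySizeInMB": memory_mb,
                    "MaxConcurrency": max_concurrency,
                },
            }
        ],
    }


def deploy_serverless_endpoint(sm_client, endpoint_name, model_name,
                               **serverless_options):
    """Create the endpoint config, then the endpoint that uses it."""
    config_name = f"{endpoint_name}-config"  # hypothetical naming scheme
    sm_client.create_endpoint_config(
        **build_serverless_endpoint_config(config_name, model_name,
                                           **serverless_options)
    )
    sm_client.create_endpoint(EndpointName=endpoint_name,
                              EndpointConfigName=config_name)
    return config_name


# Usage (requires AWS credentials and an existing SageMaker model):
#   import boto3
#   sm = boto3.client("sagemaker")
#   deploy_serverless_endpoint(sm, "abalone-xgb-serverless", "abalone-xgb-model")
```

Keeping the request builder separate from the calls that touch AWS makes the configuration easy to inspect or unit-test before anything is created in an account.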

Tutorial Host A Serverless ML Inference API With AWS Lambda And Amazon

The team turned to serverless ML inference, combining AWS Lambda for initial filtering with SageMaker endpoints for deep analysis. They trained an XGBoost model on 10M labeled transactions using SageMaker Processing, achieving 96% accuracy on imbalanced data via SMOTE augmentation.

The article presents a comprehensive guide for deploying a machine learning model using Amazon SageMaker, which involves creating a model endpoint, invoking the model using AWS Lambda, and exposing it via Amazon API Gateway.

In this guide, you’ll learn how to build and deploy a serverless chatbot that uses an LLM hosted on AWS SageMaker and a Lambda function to serve user inputs. The chatbot will be accessible via API Gateway and can be tested using Postman or integrated into a frontend.

You can use AWS Lambda in conjunction with Amazon API Gateway to create a serverless API that invokes your Amazon SageMaker endpoint.
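The Lambda side of that pattern might look roughly like the sketch below: a handler that receives an API Gateway proxy event, forwards the request body to a SageMaker endpoint through the `sagemaker-runtime` client's `invoke_endpoint` call, and returns the prediction as JSON. The endpoint name, the `ENDPOINT_NAME` environment variable, the `make_handler` helper, and the choice of `text/csv` as the payload format (which suits a built-in XGBoost model) are assumptions for illustration, not details from the original tutorials.

```python
import base64
import json
import os

# Hypothetical endpoint name; set ENDPOINT_NAME in the Lambda configuration.
ENDPOINT_NAME = os.environ.get("ENDPOINT_NAME", "abalone-xgb-serverless")


def _response(status, payload):
    """Shape a dict the way API Gateway's Lambda proxy integration expects."""
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(payload),
    }


def make_handler(runtime_client, endpoint_name=ENDPOINT_NAME):
    """Build a handler bound to a SageMaker runtime client.

    Passing the client in keeps the handler testable without AWS access.
    """

    def handler(event, context):
        body = event.get("body") or ""
        # API Gateway may deliver the body base64-encoded.
        if event.get("isBase64Encoded"):
            body = base64.b64decode(body).decode("utf-8")
        if not body.strip():
            return _response(400, {"error": "empty request body"})

        result = runtime_client.invoke_endpoint(
            EndpointName=endpoint_name,
            ContentType="text/csv",  # assumed input format: one CSV row of features
            Body=body,
        )
        prediction = result["Body"].read().decode("utf-8").strip()
        return _response(200, {"prediction": prediction})

    return handler


_cached_handler = None


def lambda_handler(event, context):
    """Lambda entry point; creates the boto3 client once per container."""
    global _cached_handler
    if _cached_handler is None:
        import boto3  # imported lazily so the module loads without the AWS SDK
        _cached_handler = make_handler(boto3.client("sagemaker-runtime"))
    return _cached_handler(event, context)
```

With `lambda_handler` set as the function's handler and an API Gateway route pointing at the function, a client can POST a CSV row of features and receive the model's prediction in the JSON response. The Lambda execution role would also need permission to call `sagemaker:InvokeEndpoint` on the endpoint.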
