RAG Evaluation

This article provides a deep dive into seven typical RAG failure points and the evaluation metrics that go with them, with practical coding examples.

The Anatomy of RAG Breakdown: 7 Failure Points (FPs)

According to researchers Barnett et al., retrieval-augmented generation (RAG) systems encounter seven specific failure points (FPs) throughout the pipeline.

In rubric-based LLM-as-a-judge evaluation, the judge is given four inputs: an instruction (which may include an input inside it), a response to evaluate, a reference answer that would receive a score of 5, and a score rubric representing the evaluation criteria.
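The four judge inputs can be made concrete with a minimal Python sketch that assembles them into a grading prompt and then pulls the score back out of the judge's reply. The `JudgeInput` class, the `build_judge_prompt` and `parse_score` helpers, the prompt wording, and the `[RESULT] <score>` output convention are all hypothetical choices for illustration. No LLM is called here; you would send the prompt to whichever judge model you use.

```python
import re
from dataclasses import dataclass
from typing import Optional


@dataclass
class JudgeInput:
    """Hypothetical container for the four inputs a rubric-based judge receives."""
    instruction: str        # may itself include an input
    response: str           # the answer being graded
    reference_answer: str   # an answer that would earn the top score (5)
    rubric: str             # evaluation criteria with score descriptions


def build_judge_prompt(item: JudgeInput) -> str:
    """Assemble a grading prompt; the wording is illustrative, not a fixed template."""
    return (
        "Grade the response on a 1-5 scale using the score rubric.\n\n"
        f"### Instruction:\n{item.instruction}\n\n"
        f"### Response to evaluate:\n{item.response}\n\n"
        f"### Reference answer (score 5):\n{item.reference_answer}\n\n"
        f"### Score rubric:\n{item.rubric}\n\n"
        "Write brief feedback, then end with '[RESULT] <integer 1-5>'."
    )


def parse_score(judge_output: str) -> Optional[int]:
    """Return the last 1-5 score that follows a '[RESULT]' marker, or None if absent."""
    matches = re.findall(r"\[RESULT\]\s*([1-5])\b", judge_output)
    return int(matches[-1]) if matches else None
```

For example, `parse_score("Feedback: Correct and concise. [RESULT] 5")` returns `5`, while a reply with no `[RESULT]` marker (or an out-of-range score such as 7) returns `None`, which is worth logging rather than silently scoring.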

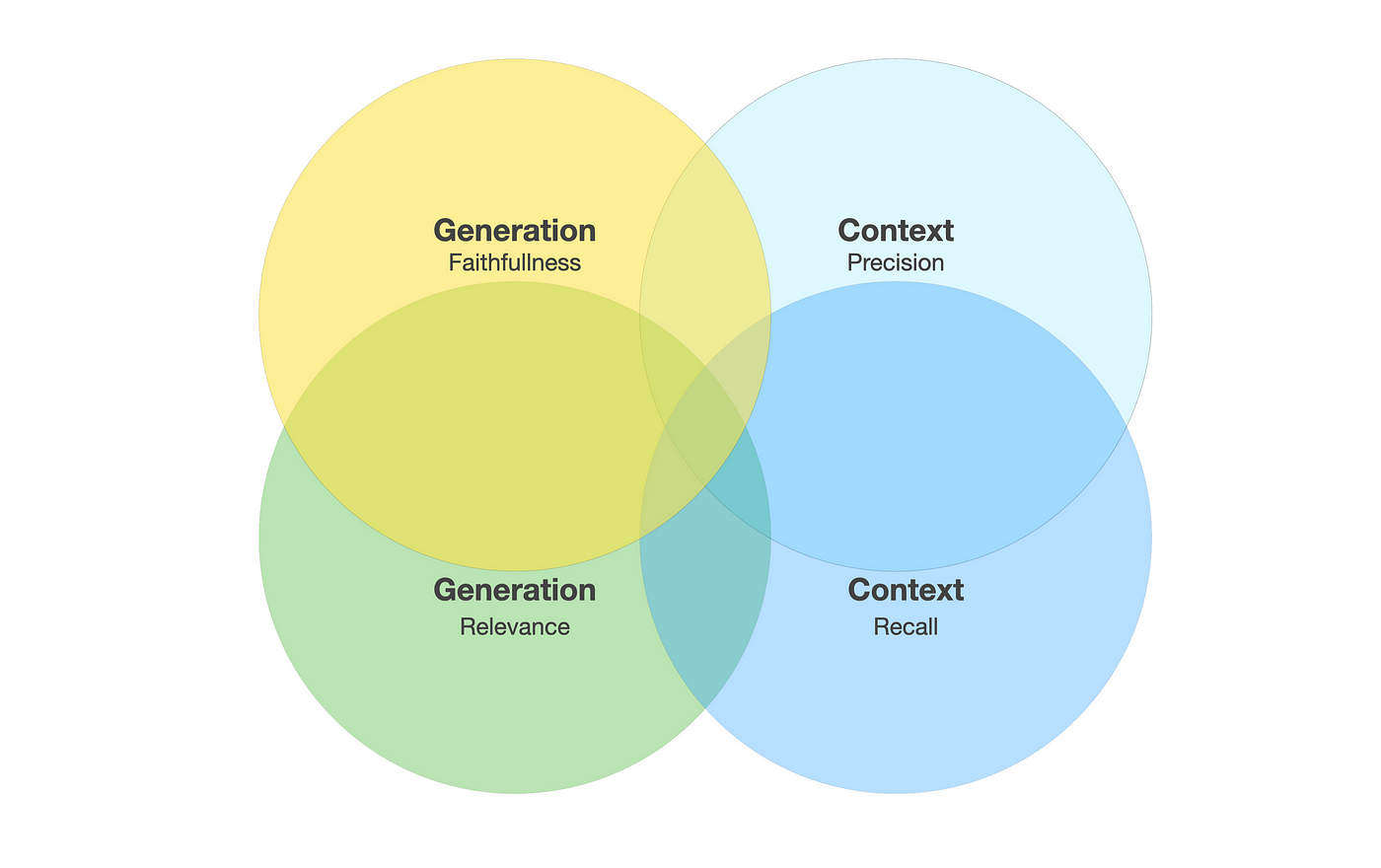

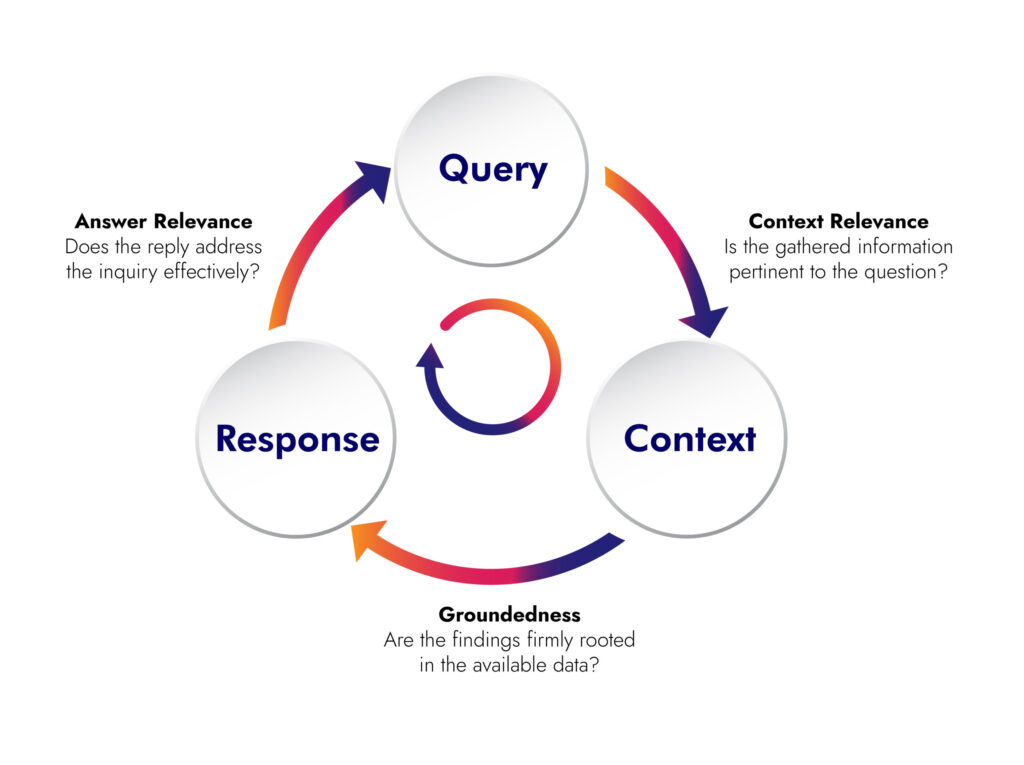

RAG Evaluation Metrics Starter Kit

Retrieval-augmented generation (RAG) is a technique used to enrich LLM outputs with additional relevant information from an external knowledge base. This allows an LLM to generate responses based on context beyond the scope of its training data.

This guide breaks down how to evaluate and test RAG systems. You'll learn how to evaluate retrieval and generation quality, build test sets with synthetic data, run experiments, and monitor in production.

Evaluating a RAG system means checking how well it retrieves information and generates accurate, relevant, and grounded responses. Evaluation metrics help check whether the system retrieves relevant information, gives accurate answers, and meets performance goals, while also guiding improvements and model comparisons.

This blog provides a simple, step-by-step guide to evaluating LLM-based RAG systems, covering why their assessment is complex, the unique challenges of RAG, key metrics, practical tools, and the future possibilities of AI validation.
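The retrieve-then-augment idea behind RAG can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the hypothetical `retrieve` function ranks documents by plain word overlap with the query (production systems typically use embedding similarity or BM25 instead), and the hypothetical `build_augmented_prompt` only assembles the text that would be sent to an LLM; no model is called.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens; a stand-in for a real tokenizer or embedding model."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(query: str, knowledge_base: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query.

    Word overlap keeps the sketch self-contained; it is not how most
    production retrievers score relevance.
    """
    query_counts = Counter(tokenize(query))

    def overlap(doc: str) -> int:
        # Size of the multiset intersection between query and document tokens
        return sum((query_counts & Counter(tokenize(doc))).values())

    ranked = sorted(knowledge_base, key=overlap, reverse=True)
    return ranked[:k]


def build_augmented_prompt(query: str, contexts: list[str]) -> str:
    """Prepend retrieved context so the LLM can answer beyond its training data."""
    context_block = "\n".join(f"- {c}" for c in contexts)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n\n"
        f"Question: {query}\nAnswer:"
    )
```

For example, given a knowledge base of "The Eiffel Tower is located in Paris.", "Python was created by Guido van Rossum.", and "Paris is the capital of France.", the query "What is the capital of France?" retrieves the last sentence first, because it shares five words with the query. Each stage is a separate function so retrieval and generation can be evaluated independently.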

RAG Evaluation Essentials (Konverge AI)

Evaluating the performance of RAG systems requires measuring both retrieval accuracy and answer quality, using metrics for retrieval, generation, and end-to-end quality, along with testing methods and monitoring practices. This covers how to measure RAG system performance across the retrieval and generation stages, frameworks that automate evaluation at scale, and production practices that catch failures before users do. Common metrics include precision, recall, and MRR (mean reciprocal rank) for retrieval and ROUGE-L for generation, and tools such as DeepEval and Ragas automate much of this work.
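The metrics named above have short textbook definitions, sketched here from scratch in Python. These helpers are hypothetical and are not the DeepEval or Ragas APIs. A few conventions are assumptions: precision@k divides by k even if fewer than k items were retrieved, ROUGE-L is reported as the F1 of longest-common-subsequence precision and recall, and text is tokenized by lowercasing and splitting on whitespace.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved items that are relevant (divides by k)."""
    if k <= 0:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant items that appear in the top k."""
    if not relevant:
        return 0.0
    return len(set(retrieved[:k]) & relevant) / len(relevant)


def mean_reciprocal_rank(results: list[tuple[list[str], set[str]]]) -> float:
    """Average of 1/rank of the first relevant item per query (0 if none is found)."""
    if not results:
        return 0.0
    total = 0.0
    for retrieved, relevant in results:
        for rank, doc in enumerate(retrieved, start=1):
            if doc in relevant:
                total += 1.0 / rank
                break
    return total / len(results)


def lcs_length(a: list[str], b: list[str]) -> int:
    """Longest common subsequence length via row-by-row dynamic programming."""
    prev = [0] * (len(b) + 1)
    for x in a:
        curr = [0]
        for j, y in enumerate(b, start=1):
            curr.append(prev[j - 1] + 1 if x == y else max(prev[j], curr[j - 1]))
        prev = curr
    return prev[-1]


def rouge_l_f1(candidate: str, reference: str) -> float:
    """ROUGE-L as the F1 of LCS-based precision and recall over whitespace tokens."""
    cand, ref = candidate.lower().split(), reference.lower().split()
    if not cand or not ref:
        return 0.0
    lcs = lcs_length(cand, ref)
    if lcs == 0:
        return 0.0
    precision, recall = lcs / len(cand), lcs / len(ref)
    return 2 * precision * recall / (precision + recall)
```

For example, if a retriever returns `["d3", "d1", "d7", "d2"]` and the relevant set is `{"d1", "d2"}`, precision@3 is 1/3 and recall@3 is 0.5. ROUGE-L F1 between "the cat sat on the mat" and "the cat is on the mat" is 5/6, since their longest common subsequence is five tokens long.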