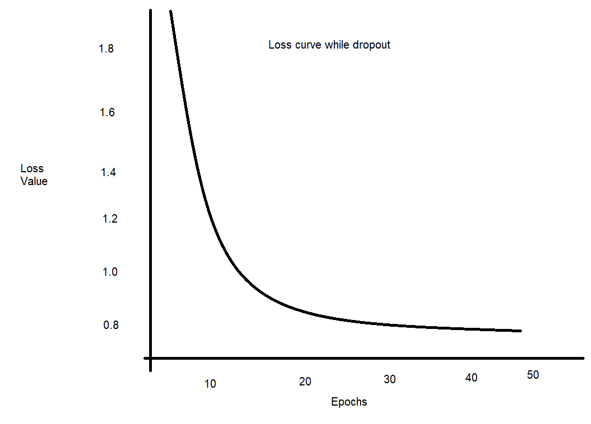

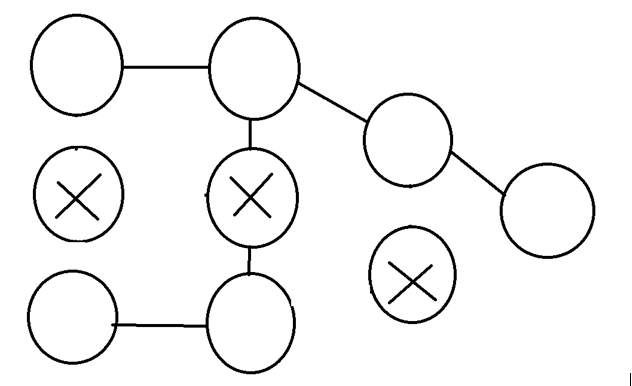

Keras Dropout: How To Use Keras Dropout With Its Model

This article shows how to avoid a common problem in training a deep learning model: overfitting, which happens when the model learns the training data too closely and fails to generalize. The Dropout layer randomly sets input units to 0 with a frequency of `rate` at each step during training, which helps prevent overfitting. Inputs not set to 0 are scaled up by 1 / (1 - rate) so that the expected sum over all inputs is unchanged.
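The scaling rule can be checked directly on the layer. The snippet below is a minimal sketch, assuming TensorFlow 2.x with `tf.keras`; the input of ones and the rate of 0.5 are illustrative choices, not values from the article.

```python
import numpy as np
import tensorflow as tf

# Illustrative values: an all-ones input and rate=0.5 (assumptions for the demo).
rate = 0.5
layer = tf.keras.layers.Dropout(rate=rate, seed=0)
x = np.ones((1, 10), dtype="float32")

# training=True: each unit is zeroed with probability `rate`;
# the survivors are scaled by 1 / (1 - rate), i.e. 2.0 here.
train_out = np.asarray(layer(x, training=True))

# training=False (inference): the layer passes its input through unchanged.
infer_out = np.asarray(layer(x, training=False))

print("training :", train_out)
print("inference:", infer_out)
```

Which units are zeroed varies with the seed and library version, but every surviving value should equal 2.0 and the inference output should match the input.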

Training deep learning models for too long on the same data can lead to overfitting, where the model performs well on training data but poorly on unseen data. Dropout regularization helps overcome this by randomly deactivating a portion of neurons during training, forcing the model to learn more robust and independent features. Dropout is a computationally cheap way to regularize a deep neural network. It works by probabilistically removing, or "dropping out," inputs to a layer, which may be input variables in the data sample or activations from a previous layer. Learn how to use dropout layers in Keras to prevent overfitting in neural networks and enhance model performance. In this article, I'll walk you through how I use these two techniques in Keras to curb overfitting, along with examples, graphs, and the logic behind every line of code.
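To make "probabilistically removing inputs" concrete, here is a small NumPy sketch of inverted dropout. It is an illustration of the idea rather than Keras's actual implementation; the function name `inverted_dropout` and the example values are hypothetical.

```python
import numpy as np

def inverted_dropout(x, rate, rng, training=True):
    """Zero each element of x with probability `rate`, rescaling the survivors.

    Hypothetical helper for illustration; not part of Keras.
    """
    if not training or rate == 0.0:
        return x  # at inference time, nothing is dropped
    keep_prob = 1.0 - rate
    mask = rng.random(x.shape) < keep_prob   # True where the unit is kept
    return np.where(mask, x / keep_prob, 0.0)

rng = np.random.default_rng(0)
activations = np.ones((4, 1000))             # stand-in for a layer's outputs
dropped = inverted_dropout(activations, rate=0.2, rng=rng)

print("fraction zeroed:", np.mean(dropped == 0.0))   # close to 0.2
print("mean activation:", dropped.mean())            # close to 1.0
```

Because survivors are divided by the keep probability, the mean activation stays roughly the same with and without dropout, so no rescaling is needed at inference time.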

One such technique is dropout regularization, in which neurons in the model are removed at random during training. In this article, we will explore how dropout regularization works, how you can implement it in your own model, and its benefits and disadvantages compared to other methods.

A typical example is a CNN for image classification on the CIFAR-10 dataset, with dropout layers added to prevent overfitting. Dropout layers are especially useful when training deep models on relatively small datasets, or complex models that have a high risk of overfitting. In Keras, you can introduce dropout in a network via the `tf.keras.layers.Dropout` layer, which is applied to the output of the layer right before it. Add two dropout layers to your network to check how well they reduce overfitting.

Learn practical regularization techniques in Keras to minimize overfitting. Explore L1, L2, dropout, and early stopping methods with hands-on examples for improved model performance. Apply dropout at 20 to 50% on hidden layers and measure validation loss.
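The original CNN code is not reproduced here, so the following is only a sketch of what such a model might look like, assuming TensorFlow 2.x. The layer sizes, the dropout rates of 0.25 and 0.5, and the helper name `build_cnn` are assumptions chosen for illustration; the input shape of 32x32x3 and the 10 output classes match CIFAR-10.

```python
import numpy as np
import tensorflow as tf

def build_cnn(dropout_conv=0.25, dropout_dense=0.5):
    """Small CNN with two Dropout layers (hypothetical architecture)."""
    return tf.keras.Sequential([
        tf.keras.Input(shape=(32, 32, 3)),            # CIFAR-10 image size
        tf.keras.layers.Conv2D(32, 3, padding="same", activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Conv2D(64, 3, padding="same", activation="relu"),
        tf.keras.layers.MaxPooling2D(),
        tf.keras.layers.Dropout(dropout_conv),        # applied to the pooled features
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(128, activation="relu"),
        tf.keras.layers.Dropout(dropout_dense),       # applied to the Dense(128) output
        tf.keras.layers.Dense(10, activation="softmax"),
    ])

model = build_cnn()
model.compile(
    optimizer="adam",
    loss="sparse_categorical_crossentropy",
    metrics=["accuracy"],
)

# Sanity check on a random batch; no dataset download is needed for this.
probs = model(np.random.rand(2, 32, 32, 3).astype("float32"), training=False)
print(probs.shape)
```

To measure the effect, one would train with `model.fit(..., validation_data=...)` and compare validation loss against the same model built with both rates set to 0. Keras disables dropout automatically during evaluation and prediction.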