A Survey On Efficient Inference For Large Language Models

To mitigate the financial burden and alleviate the constraints imposed by hardware resources, optimizing inference performance is necessary. In this paper, we introduce an easily deployable inference performance optimization solution aimed at accelerating LLMs on CPUs.

Distributed Inference Performance Optimization For LLMs On CPUs

At first glance, deploying large language models outside of GPU-rich datacenters feels daunting, yet this work tackles exactly that problem by focusing on inference performance in constrained environments and on the high cost of hardware resources. To reduce the burden of hardware limitations, we propose an efficient distributed inference optimization solution for LLMs on CPUs.

Inference Performance Optimization For Large Language Models On CPUs

To mitigate the financial burden and alleviate the constraints imposed by hardware resources, optimizing inference performance is necessary. In this paper, we introduce an easily deployable inference performance optimization solution aimed at accelerating LLMs on CPUs.


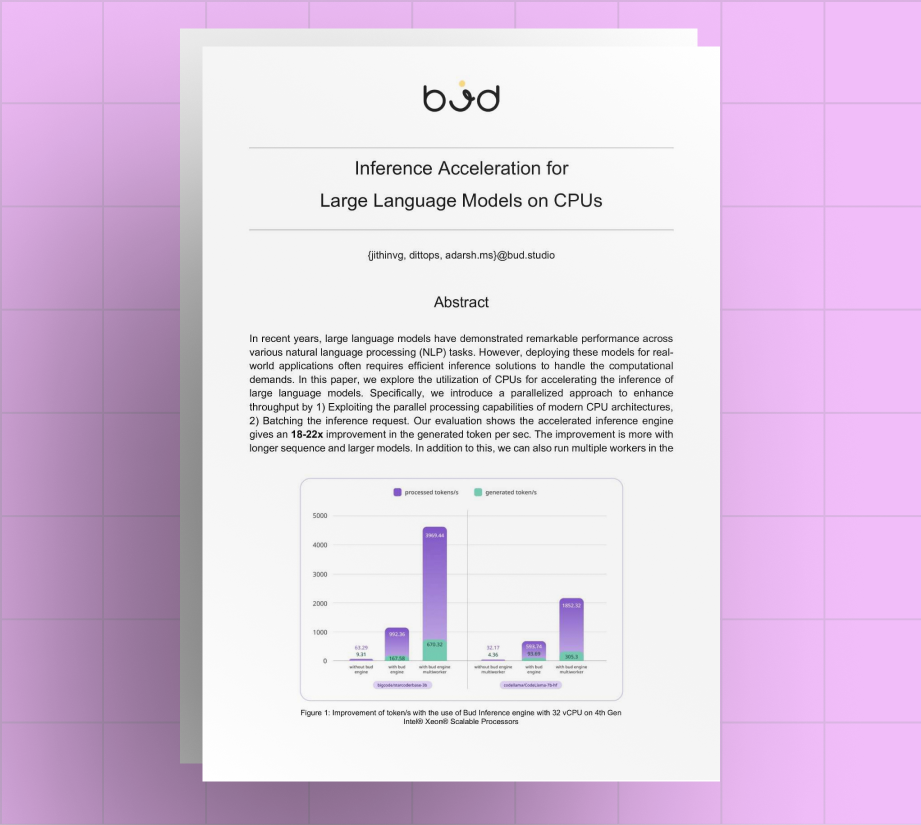

Inference Acceleration For Large Language Models On CPUs Budecosystem
