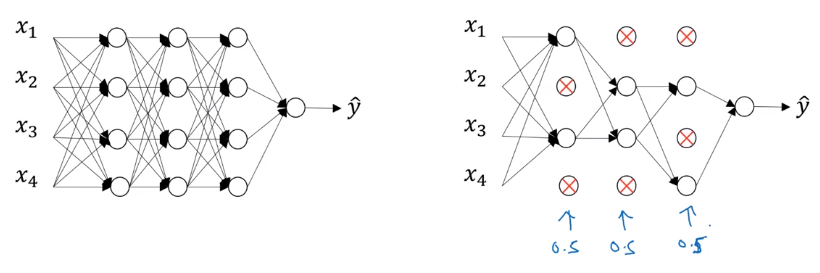

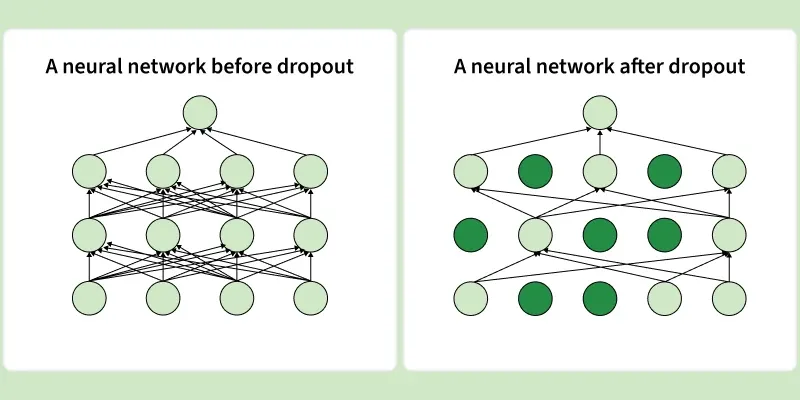

Dropout Regularization Improving Deep Neural Networks Hyperparameter

Training deep learning models for too long on the same data can lead to overfitting, where the model performs well on training data but poorly on unseen data. Dropout regularization helps overcome this by randomly deactivating a portion of neurons during training, which forces the model to learn more robust and independent features. In machine learning, “dropout” refers to the practice of disregarding certain nodes in a layer at random during training. In deep learning it serves as a regularization approach that reduces overfitting by discouraging units from becoming codependent on one another.
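The random deactivation described above can be sketched in a few lines of plain Python. This is a minimal illustration, not a reference implementation: the function name `dropout`, the `rate` and `training` parameters, and the sample activations are all assumptions made for the example. It also uses the common "inverted dropout" convention of rescaling the surviving activations by `1 / (1 - rate)`, which the text above does not mention, so that the expected activation is the same with and without dropout.

```python
import random


def dropout(activations, rate, training=True, rng=None):
    """Hypothetical helper: inverted dropout over a list of activations.

    rate is the probability of dropping each unit (assumed to be in [0, 1)).
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    # At inference time (or with rate 0) every unit is kept unchanged.
    if not training or rate == 0.0:
        return list(activations)
    rng = rng or random.Random()
    keep_prob = 1.0 - rate
    # Keep each unit with probability keep_prob and rescale it;
    # otherwise set its output to zero.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


# Illustrative values only.
rng = random.Random(0)
hidden = [0.5, 1.2, -0.3, 2.0, 0.8, -1.1]
print("training :", dropout(hidden, rate=0.5, rng=rng))
print("inference:", dropout(hidden, rate=0.5, training=False))
```

Because each unit may vanish on any given pass, downstream units cannot rely on one specific upstream unit being present, which is the intuition behind the reduced codependence.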

Dropout Regularization In Deep Learning

We explain various dropout methods, from standard random dropout to autodrop dropout (from the original to the advanced), and also discuss their performance and experimental capabilities.

Dropout is a simple yet powerful regularization technique introduced by Srivastava et al. in 2014. The core idea is to randomly “drop out” a subset of neurons during each training iteration.

Following our look at weight regularization techniques like L1 and L2, which penalize large weight values, we now turn to a distinctly different method for combating overfitting: dropout. This technique works by randomly setting a fraction of neuron outputs to zero during each training update.

Two commonly applied methods in deep neural networks, as you may have heard, are weight regularization and dropout. In this article, we will work through these two methods and implement them in Python.
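To make the contrast concrete, the sketch below places the two ideas side by side: L1 and L2 regularization add a penalty computed from the weights to the loss, while dropout leaves the loss alone and instead draws a fresh random mask over the units at every training update. The function names (`l1_penalty`, `l2_penalty`, `sample_mask`), the coefficient `lam`, and the numbers are hypothetical and chosen only for illustration.

```python
import random


def l1_penalty(weights, lam):
    """L1 term added to the loss: lam * sum of absolute weights."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights, lam):
    """L2 term added to the loss: lam * sum of squared weights."""
    return lam * sum(w * w for w in weights)


def sample_mask(n_units, rate, rng):
    """Dropout mask: 0 drops a unit (probability rate), 1 keeps it."""
    return [1 if rng.random() >= rate else 0 for _ in range(n_units)]


weights = [1.0, -2.0, 0.5]  # illustrative weights
print("L1 penalty:", l1_penalty(weights, lam=0.01))
print("L2 penalty:", l2_penalty(weights, lam=0.01))

# A new mask is drawn at each training update, so a different
# subset of units is silenced every time.
rng = random.Random(0)
for step in range(3):
    print("update", step, "mask:", sample_mask(6, rate=0.5, rng=rng))
```

The penalties depend only on the weight values, whereas the mask depends only on chance, which is why the two techniques can be combined in the same model.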

031 Dropout Regularization

Dropout is a regularization technique used in neural networks to prevent overfitting. It works by randomly setting a fraction of the input units to 0 at each update during training, which reduces the co-adaptation of neurons and makes the network more robust.

In this section, we want to show that dropout can be used as a regularization technique for deep neural networks. It can reduce overfitting and make our network perform better on the test set (like the L1 and L2 regularization we saw in the AM207 lectures).

This article explores dropout regularization, how it works, and why it’s a powerful tool for training deep learning models. What is dropout regularization? Dropout is a regularization technique where, during the training phase, a subset of neurons is randomly deactivated (“dropped out”) and ignored in each forward and backward pass.
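The difference between the training phase and test time can be illustrated with a single linear unit whose inputs pass through dropout. This is a rough sketch under stated assumptions: the helper `dense_with_dropout`, its parameters, and the input values are invented for the example, only the forward pass is shown, and the surviving inputs are rescaled by `1 / (1 - rate)` (inverted dropout) so that training outputs average out to the test-time output.

```python
import random


def dense_with_dropout(inputs, weights, bias, rate, training, rng):
    """Hypothetical single linear unit with dropout applied to its inputs."""
    keep_prob = 1.0 - rate
    if training:
        # Training: zero each input with probability rate, rescale the rest.
        inputs = [x / keep_prob if rng.random() < keep_prob else 0.0
                  for x in inputs]
    # Test time: inputs are used as-is, so the output is deterministic.
    return sum(x * w for x, w in zip(inputs, weights)) + bias


rng = random.Random(42)
x = [1.0, 2.0, 3.0]   # illustrative inputs
w = [0.2, -0.5, 0.4]  # illustrative weights
b = 0.1

test_output = dense_with_dropout(x, w, b, rate=0.5, training=False, rng=rng)
train_outputs = [dense_with_dropout(x, w, b, 0.5, True, rng) for _ in range(5)]
print("test-time output:", test_output)
print("training outputs:", train_outputs)
```

The training outputs vary from pass to pass because a different subset of inputs is dropped each time, while the test-time call always returns the same value. In a full implementation the same mask would also be applied in the backward pass, so dropped units receive no gradient for that update.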

