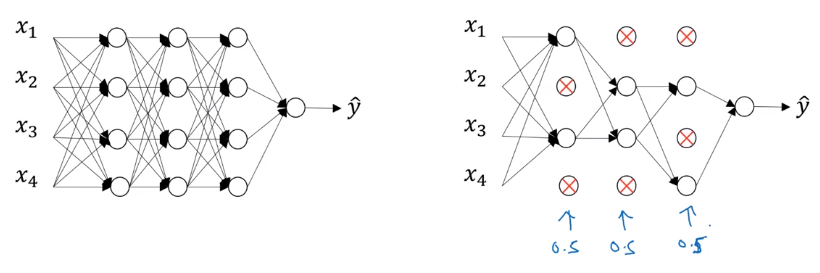

Dropout Regularization: Improving Deep Neural Networks

In deep learning, dropout regularization randomly drops neurons from the hidden layers during training, which helps the network generalize. In this video, we will look at the theory behind dropout. Dropout is a regularization technique that reduces overfitting by randomly setting a fraction of a layer's input units to zero during training. At each training step a different random subset of neurons is dropped, so the network cannot rely on any single neuron and is pushed to learn redundant representations.

Dropout is a simple and powerful regularization technique for neural networks and deep learning models. In this post, you will discover the dropout regularization technique and how to apply it to your models in Python with Keras. The codebasics deep learning Keras/TensorFlow tutorial series (tutorial 13, dropout layer) has a companion notebook on dropout regularization for an ANN.

The Keras Dropout layer randomly sets input units to 0 with a frequency of `rate` at each step during training time, which helps prevent overfitting. Inputs not set to 0 are scaled up by `1 / (1 - rate)` so that the expected sum over all inputs is unchanged. In this blog post, we cover how to implement Keras-based neural networks with dropout. We do so by first recalling the basics of dropout, to understand at a high level what we're working with.
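To make the `1 / (1 - rate)` scaling concrete, here is a minimal NumPy sketch of the behavior described above. It is not Keras's actual implementation; the function name `dropout_forward` and its `training` and `rng` parameters are assumptions made for illustration.

```python
import numpy as np

def dropout_forward(x, rate, training=True, rng=None):
    """Illustrative (hypothetical) dropout: zero elements with probability
    `rate` and rescale the survivors by 1 / (1 - rate)."""
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    # At inference time (or with rate 0) the input passes through unchanged.
    if not training or rate == 0.0:
        return x
    rng = np.random.default_rng() if rng is None else rng
    keep_prob = 1.0 - rate
    # Boolean mask: True for units that are kept at this step.
    mask = rng.random(x.shape) < keep_prob
    # Rescale kept units so the expected sum of the output matches the input.
    return x * mask / keep_prob

x = np.ones((1000, 100))
y = dropout_forward(x, rate=0.2, rng=np.random.default_rng(0))
print("fraction zeroed:", np.mean(y == 0))    # close to the rate, 0.2
print("mean after dropout:", y.mean())        # close to the input mean, 1.0
```

Because the surviving units are scaled up during training, nothing needs to be rescaled at inference time, which is why the `training=False` path simply returns the input.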

L2 regularization and dropout can be used together to reduce overfitting of a neural network model. Let's break down the steps needed to implement dropout and regularization in a neural network using TensorFlow: we will create a simple feedforward neural network and apply dropout regularization to it.

Whether you're building a small neural network or training a deep learning model, adding dropout can often improve how well the model generalizes, at the cost of one extra layer and one hyperparameter (the dropout rate). Beyond the implementation, it is worth knowing dropout's hyperparameters and drawbacks, along with other popular techniques to combat overfitting.
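The steps above can be sketched with `tf.keras` roughly as follows. This is an illustration under stated assumptions, not the code from any of the tutorials mentioned: the layer sizes, the dropout rate of 0.5, the L2 strength of 1e-4, the helper name `build_model`, and the synthetic data are all chosen arbitrarily.

```python
import numpy as np
import tensorflow as tf

def build_model(n_features=20, rate=0.5, l2_strength=1e-4):
    """Hypothetical feedforward classifier with L2 weight decay and dropout."""
    reg = tf.keras.regularizers.l2(l2_strength)
    return tf.keras.Sequential([
        tf.keras.Input(shape=(n_features,)),
        tf.keras.layers.Dense(64, activation="relu", kernel_regularizer=reg),
        tf.keras.layers.Dropout(rate),   # drops hidden activations during training only
        tf.keras.layers.Dense(64, activation="relu", kernel_regularizer=reg),
        tf.keras.layers.Dropout(rate),
        tf.keras.layers.Dense(1, activation="sigmoid"),
    ])

model = build_model()
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])

# Synthetic binary-classification data, used only so the sketch runs end to end.
rng = np.random.default_rng(0)
X = rng.normal(size=(256, 20)).astype("float32")
y = (X[:, 0] + X[:, 1] > 0).astype("float32")

# Keras enables dropout automatically inside fit().
model.fit(X, y, epochs=2, batch_size=32, validation_split=0.2, verbose=0)

# With training=False the Dropout layers are inactive, so repeated
# predictions on the same inputs are identical.
p1 = model(X[:4], training=False).numpy()
p2 = model(X[:4], training=False).numpy()
print(np.allclose(p1, p2))
```

In practice the dropout rate and L2 strength are tuned like any other hyperparameter, typically by comparing training and validation curves.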
