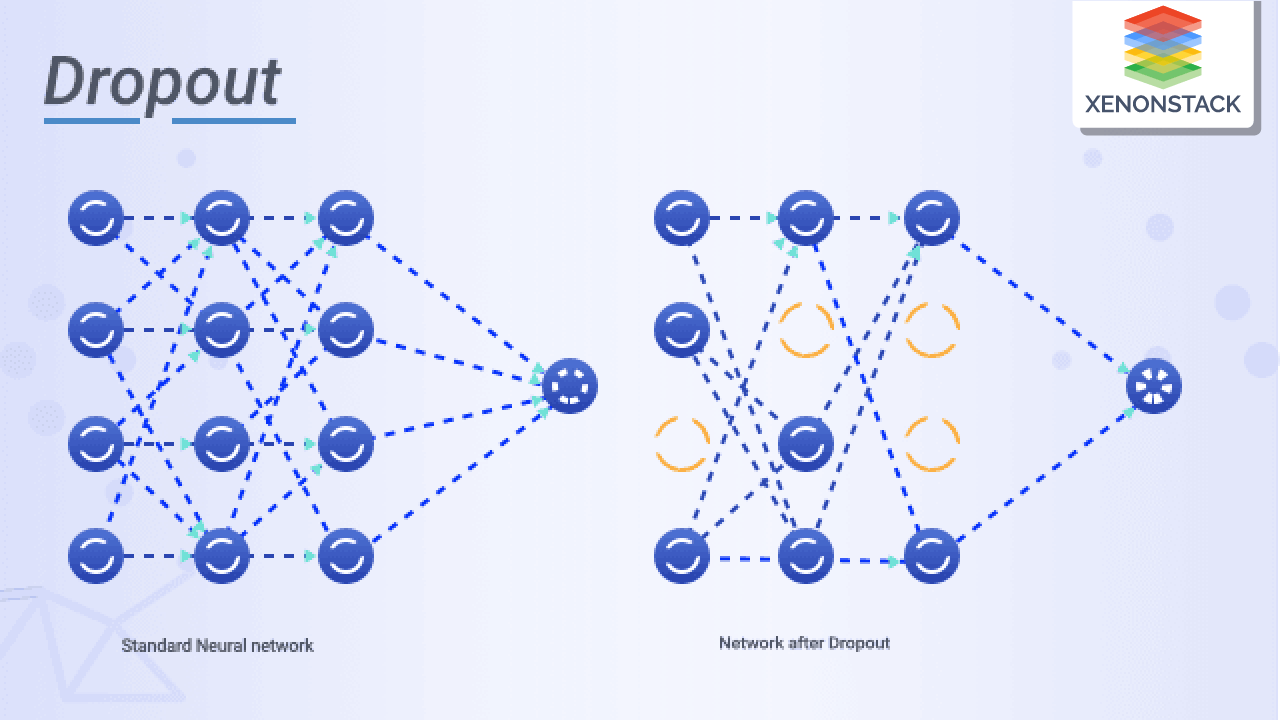

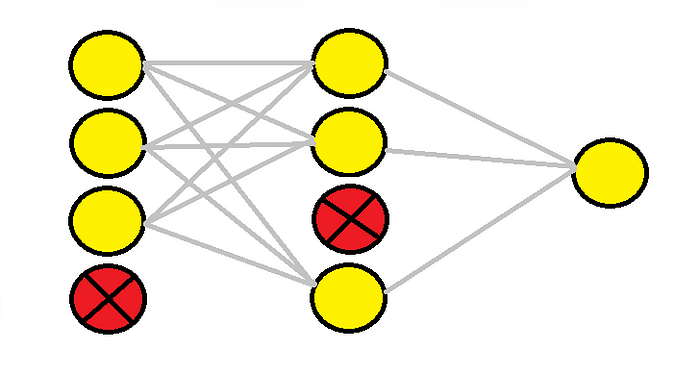

Dropout Regularization: Improving Deep Neural Networks

The dropout rate is a hyperparameter that controls what fraction of neurons is dropped. Dropout works by randomly turning off that fraction of neurons during each training pass, and the remaining active neurons are scaled so that the expected output stays stable. This reduces co-dependency among neurons and improves generalization. When we apply dropout to a neural network, we are creating a “thinned” network: a different combination of hidden-layer units is dropped at random at each point during training.
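The scaling of the remaining neurons can be illustrated with a minimal sketch of so-called inverted dropout, written in plain Python for readability. The function name `dropout`, the `rate` convention (probability of dropping a unit), and the toy input are assumptions made for this example, not part of the original text; real frameworks do the same thing on whole tensors.

```python
import random

def dropout(activations, rate, rng, training=True):
    """Inverted dropout over a list of activations.

    `rate` is assumed to be the probability of dropping each unit.
    Survivors are divided by the keep probability so that the expected
    value of every activation is unchanged.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        # At inference time nothing is dropped and nothing is rescaled.
        return list(activations)
    keep_prob = 1.0 - rate
    out = []
    for a in activations:
        if rng.random() < keep_prob:
            out.append(a / keep_prob)  # kept unit, scaled up
        else:
            out.append(0.0)            # dropped unit
    return out

rng = random.Random(0)
activations = [1.0] * 10_000
dropped = dropout(activations, rate=0.2, rng=rng)

zero_fraction = dropped.count(0.0) / len(dropped)  # close to the rate
mean_after = sum(dropped) / len(dropped)           # close to the original mean
print(f"dropped fraction: {zero_fraction:.3f}, mean after dropout: {mean_after:.3f}")
```

With a rate of 0.2, roughly one unit in five is zeroed and the survivors are multiplied by 1 / 0.8, which is why the mean activation stays near its original value.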

What Is the Dropout Regularization Technique?

Dropout is a regularization technique for addressing overfitting in deep neural networks by randomly dropping units during training. Dynamically deactivating subsets of the network in this way promotes more robust representations, and it improves the performance of neural networks on a variety of tasks and data sets. At test time, the effect of averaging the predictions of the many thinned networks can be approximated by a single unthinned network with smaller weights. Dropout is one of the most popular regularization methods in the research literature for preventing a neural network from overfitting during training. In this article, we will explore how dropout regularization works, how you can implement it in your own model, and its benefits and disadvantages compared to other methods.
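The "smaller weights at test time" idea can be checked numerically on a toy case. The sketch below assumes the classic formulation in which units are kept with probability `keep_prob` during training and are not rescaled, so the weights are multiplied by `keep_prob` at test time instead. The helper names, weights, and inputs are hypothetical values chosen for illustration. For a single linear unit the match holds exactly in expectation; for deep nonlinear networks it is only an approximation.

```python
import random

def linear_unit(weights, inputs):
    """Weighted sum of the inputs (no bias, no nonlinearity)."""
    return sum(w * x for w, x in zip(weights, inputs))

def thinned_output(weights, inputs, keep_prob, rng):
    """Output of one randomly thinned network: each input unit is kept
    with probability keep_prob and zeroed otherwise (no rescaling)."""
    masked = [x if rng.random() < keep_prob else 0.0 for x in inputs]
    return linear_unit(weights, masked)

rng = random.Random(42)
weights = [0.5, -1.0, 2.0, 0.25]  # hypothetical trained weights
inputs = [1.0, 2.0, 3.0, 4.0]     # hypothetical input activations
keep_prob = 0.5

# Average the predictions of many thinned networks.
n_samples = 20_000
ensemble_avg = sum(
    thinned_output(weights, inputs, keep_prob, rng) for _ in range(n_samples)
) / n_samples

# Single pass through the full network with scaled-down weights.
scaled_weights = [keep_prob * w for w in weights]
single_pass = linear_unit(scaled_weights, inputs)

print(f"ensemble average: {ensemble_avg:.3f}, scaled-weight pass: {single_pass:.3f}")
```

The single scaled-weight pass costs one forward evaluation, whereas the explicit average needs thousands, which is the practical reason for the approximation.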

Dropout Regularization in Deep Learning

Dropout is a powerful regularization technique designed to prevent overfitting in deep learning models. Proposed by Geoffrey Hinton and his colleagues in 2012, it is a computationally inexpensive approach designed specifically for neural networks, and a simple, effective way to reduce overfitting and improve generalization. During the training phase, a randomly chosen subset of neurons is deactivated (“dropped out”, or ignored) in each forward and backward pass, which simulates training many models with different architectures. Because a certain percentage of neurons is missing in every training iteration, the network is forced to learn robust and redundant representations of the input data.
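To make "each forward and backward pass" concrete, here is a minimal sketch of a dropout layer that samples a mask in the forward pass and reuses the same mask in the backward pass, so dropped units receive no gradient. The class name `Dropout`, its method names, and the `seed` parameter are assumptions for this example and do not mirror any particular library's API.

```python
import random

class Dropout:
    """Toy dropout layer operating on plain Python lists."""

    def __init__(self, rate, seed=None):
        if not 0.0 <= rate < 1.0:
            raise ValueError("rate must be in [0, 1)")
        self.rate = rate
        self.rng = random.Random(seed)
        self.mask = None  # set by the most recent training-mode forward pass

    def forward(self, x, training=True):
        if not training:
            # Inference: every unit stays active, no mask is stored.
            self.mask = None
            return list(x)
        keep_prob = 1.0 - self.rate
        # Each mask entry is 0 (dropped) or 1 / keep_prob (kept and scaled).
        self.mask = [
            1.0 / keep_prob if self.rng.random() < keep_prob else 0.0
            for _ in x
        ]
        return [xi * m for xi, m in zip(x, self.mask)]

    def backward(self, grad_out):
        if self.mask is None:
            return list(grad_out)
        # Gradients flow only through the units that were kept.
        return [g * m for g, m in zip(grad_out, self.mask)]

layer = Dropout(rate=0.5, seed=1)
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]

y1 = layer.forward(x)                 # one thinned network
g1 = layer.backward([1.0] * len(x))   # gradient w.r.t. x for that network
mask1 = list(layer.mask)

y2 = layer.forward(x)                 # a fresh mask is sampled here
mask2 = list(layer.mask)

print("first mask: ", mask1)
print("second mask:", mask2)
```

Every training-mode call to `forward` draws a new mask, so successive iterations typically train different thinned networks that share the same underlying weights.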
