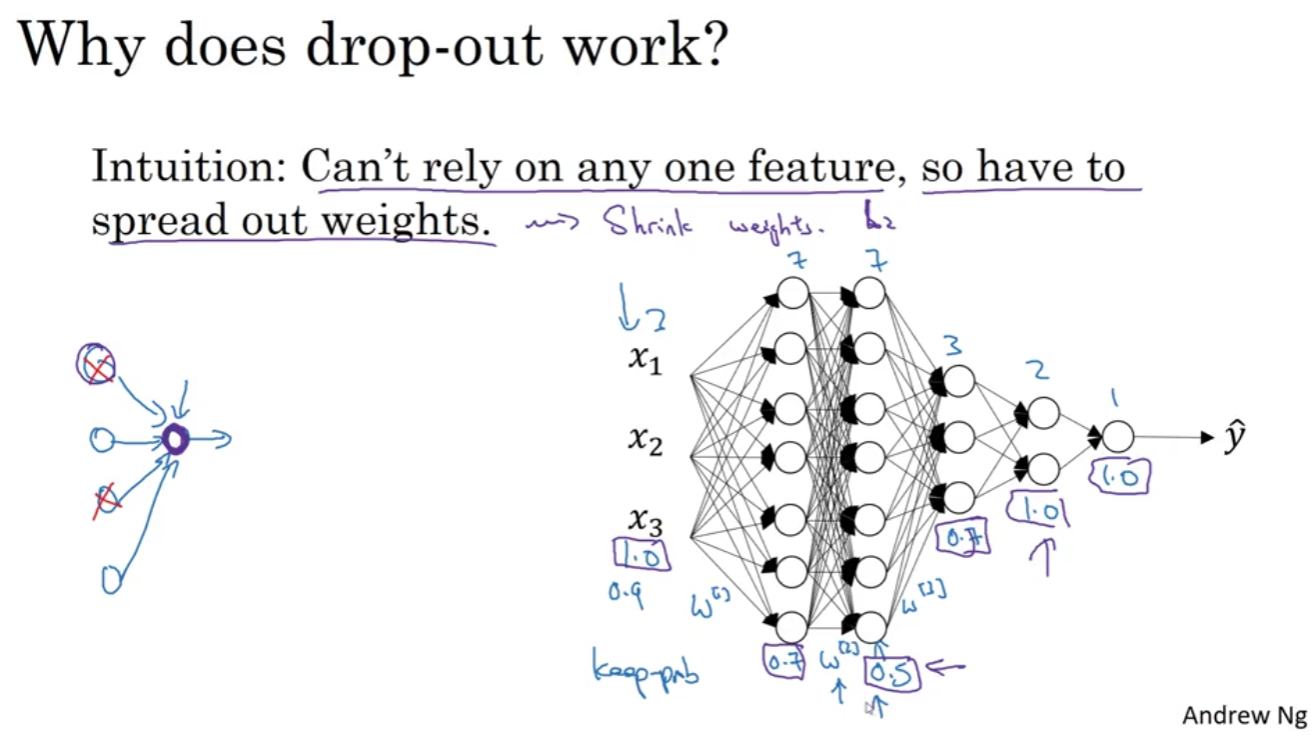

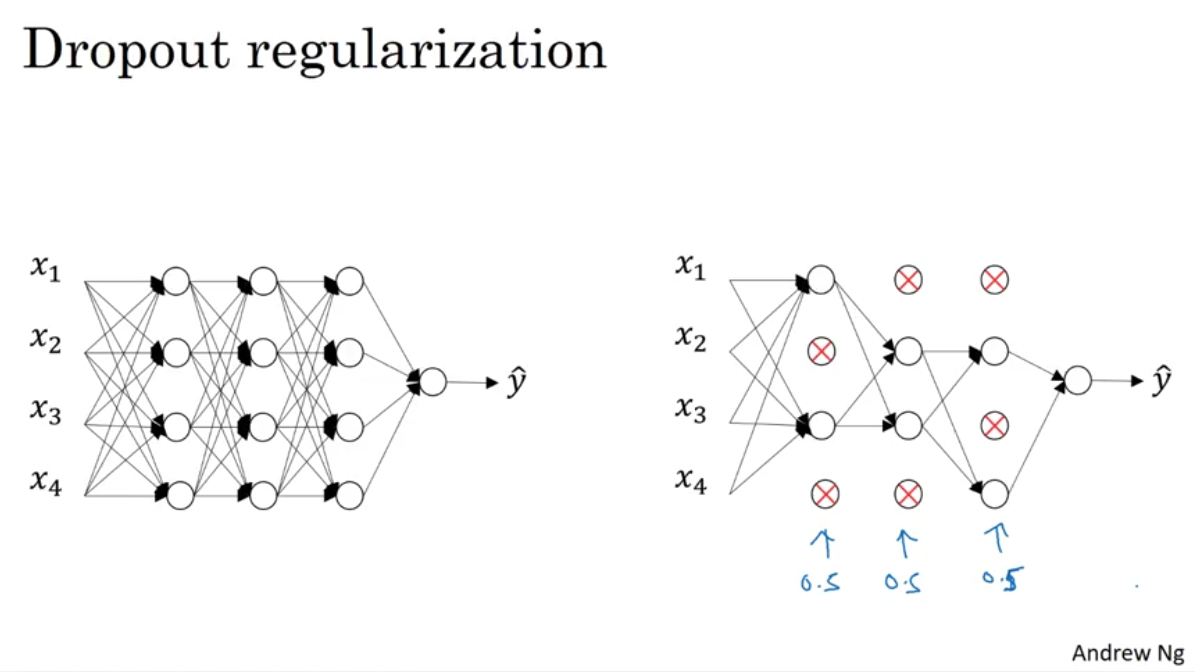

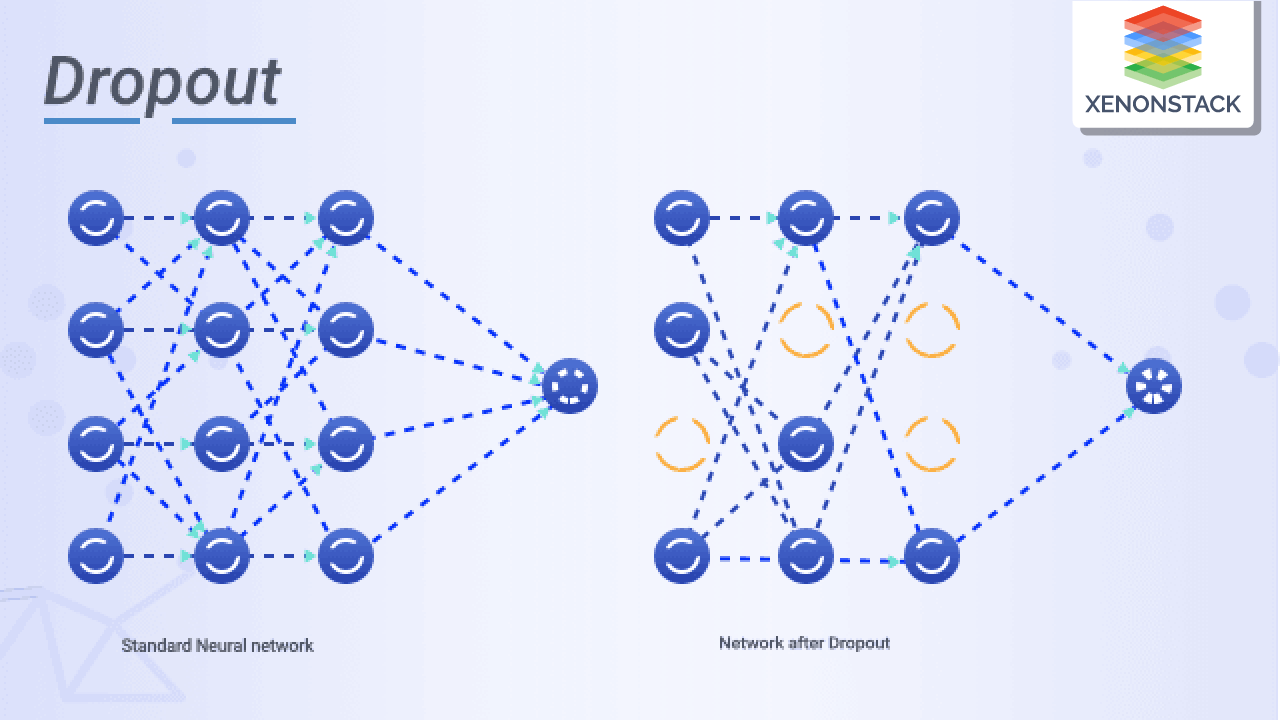

DNN Dropout Regularization

Dropout works by randomly turning off a fraction of neurons during each training pass. The dropout rate controls how many neurons are dropped, and the remaining active neurons are scaled to keep the output stable. This reduces co-dependency among neurons and improves generalization. Surveys of the technique cover various dropout methods, from standard random dropout to autodrop dropout (from the original to the advanced), along with their performance and experimental capabilities.
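The drop-and-rescale step can be sketched in a few lines of plain Python. This is a minimal illustration, assuming the common "inverted dropout" convention in which surviving activations are divided by the keep probability; the function name `dropout` and its parameters `rate`, `training`, and `rng` are hypothetical and not taken from any particular library.

```python
import random


def dropout(activations, rate, training=True, rng=None):
    """Apply inverted dropout to a list of activation values.

    Assumption: `rate` is the probability of dropping each unit, and
    survivors are scaled by 1 / (1 - rate) so the expected value of each
    activation is unchanged.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    # At inference time (or with rate 0) the layer passes values through.
    if not training or rate == 0.0:
        return list(activations)
    rng = rng or random.Random()
    keep_prob = 1.0 - rate
    # Each unit is kept with probability keep_prob and rescaled, else zeroed.
    return [a / keep_prob if rng.random() < keep_prob else 0.0
            for a in activations]


if __name__ == "__main__":
    rng = random.Random(0)
    hidden = [0.5, 1.0, 1.5, 2.0]
    print(dropout(hidden, rate=0.5, rng=rng))         # a fresh random mask per call
    print(dropout(hidden, rate=0.5, training=False))  # unchanged at inference
```

Because a new mask is drawn on every call, each training pass sees a different thinned network, which is what discourages units from relying on one another.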

Dropout is a regularization technique for neural networks that randomly sets a fraction of neuron activations to zero during training. It offers a computationally cheap and remarkably effective way to reduce overfitting and improve generalization error in deep neural networks of all kinds. It was proposed by Geoffrey Hinton et al. in 2012 [1] and described in full detail by Nitish Srivastava, Hinton, Alex Krizhevsky, Ilya Sutskever, and Ruslan Salakhutdinov in a 2014 JMLR paper [2]. The approach is inexpensive yet ingenious, and it was designed specifically for neural networks. One related proposal for improving the generalization ability of deep neural networks casts its approach in the form of a regular convolutional neural network (CNN) weight layer.
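The zeroing of activations during training can also be written compactly. The notation here is an assumption made for illustration, not taken from the cited papers: $p$ is the drop rate, $h_i$ is the activation of unit $i$, and $m_i$ is its random mask. Under the inverted-dropout convention, where kept units are rescaled during training:

$$
m_i \sim \mathrm{Bernoulli}(1-p), \qquad
\tilde{h}_i = \frac{m_i\, h_i}{1-p}, \qquad
\mathbb{E}\big[\tilde{h}_i\big] = \frac{(1-p)\, h_i}{1-p} = h_i .
$$

The expected activation is unchanged, so no rescaling is needed at inference time. An equivalent alternative is to leave the training activations unscaled and instead scale the weights at test time.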

Dropout Regularization Improving Deep Neural Networks Hyperparameter

What is dropout? In machine learning, "dropout" refers to the practice of disregarding certain nodes in a layer at random during training. Dropout regularization in deep learning prevents overfitting by ensuring that no units are codependent with one another. By randomly deactivating neurons during training, it improves the generalization of neural networks, which is why it is so powerful and widely used. Dropout is often covered alongside two other popular regularization methods, L-norm regularization and batch normalization. Combining batch normalization and dropout as regularizers brings its own challenges, best practices, and scenarios.

