Distributed Processing Using Ray Framework In Python Datacamp

In this blog, we explored distributed processing with the Ray framework in Python. Ray provides a simple and flexible way to parallelize AI and Python applications, letting us draw on the combined resources of multiple machines. This repository provides a practical, hands-on guide to building scalable distributed applications with Ray, a unified framework for scaling AI and Python applications.

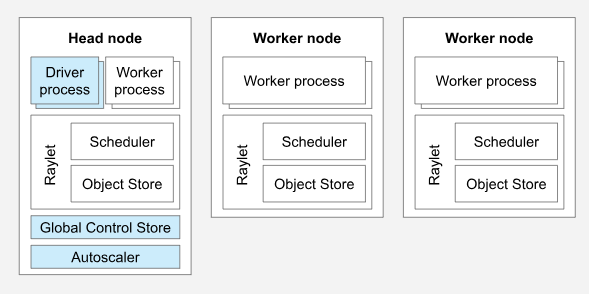

Ray is an open-source, high-performance distributed execution framework designed primarily for scalable, parallel Python and machine learning applications. It lets developers scale Python code from a single machine to a cluster with few code changes, and its aim is to simplify distributed programming. This is the first in a two-part series on distributed computing using Ray: this part shows how to use Ray on your local PC, and part 2 shows how to scale Ray to multi-server clusters in the cloud. Ray also ships a toolkit of libraries for building ML applications: distributed data processing, model training, tuning, reinforcement learning, model serving, and more.
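To make the "single machine" case concrete, here is a minimal sketch of running independent tasks in parallel with Ray on a local PC. The names `slow_square` and `run_parallel` are hypothetical and chosen only for illustration. The serial fallback is an assumption added so the snippet still runs where Ray is not installed.

```python
# Minimal sketch: parallel tasks on a local machine with Ray.
# `slow_square` and `run_parallel` are hypothetical names for illustration.
import time

try:
    import ray  # installed with: pip install ray
except ImportError:  # assumption: fall back to serial execution without Ray
    ray = None


def slow_square(x):
    """Stand-in for an expensive, independent unit of work."""
    time.sleep(0.1)
    return x * x


def run_parallel(values):
    """Square each value, as parallel Ray tasks when Ray is available."""
    if ray is None:
        return [slow_square(v) for v in values]

    ray.init(ignore_reinit_error=True)  # start a local Ray instance
    try:
        # Equivalent to decorating slow_square with @ray.remote.
        remote_square = ray.remote(slow_square)
        # .remote() schedules a task and returns an object reference immediately.
        refs = [remote_square.remote(v) for v in values]
        # ray.get blocks until the tasks finish and returns results in order.
        return ray.get(refs)
    finally:
        ray.shutdown()


if __name__ == "__main__":
    print(run_parallel(range(8)))
```

The function body is unchanged between the serial and Ray paths. Only the call site differs (`.remote()` plus `ray.get`), which is what "few code changes" looks like in practice.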

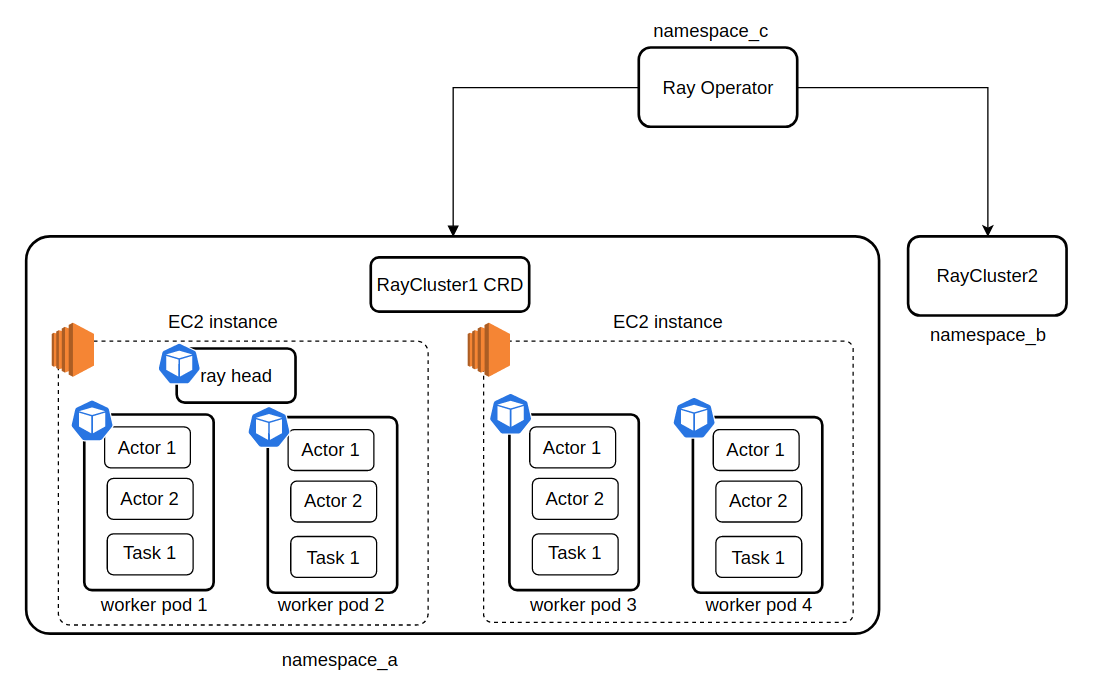

We use Ray to handle large-scale workloads that require parallel processing or distributed computing, such as training massive machine learning models, tuning hyperparameters, serving models in production, or processing big datasets. This module guides you through distributed model training with Ray: by fine-tuning a transformer for a computer vision task, ML practitioners will learn how to scale training workloads. Ray lets users parallelize and scale Python code across multiple CPUs or GPUs, which suits it to machine learning models, data processing pipelines, reinforcement learning algorithms, and real-time decision-making systems. In this blog post, we'll examine one such open-source distributed computing framework: Ray. We'll also look at KubeRay, a Kubernetes operator that integrates Ray with Kubernetes clusters for distributed computing in cloud-native environments.
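As a rough illustration of the hyperparameter-tuning workload mentioned above, the sketch below fans a small grid search out as plain Ray tasks, each requesting one CPU. It deliberately does not use Ray's dedicated tuning library. The objective `toy_score` is made up, `grid_search` is a hypothetical helper, and the serial fallback is an assumption so the snippet runs without Ray installed.

```python
# Sketch: a tiny grid search fanned out as Ray tasks (not Ray's tuning library).
# `toy_score` and `grid_search` are hypothetical; the objective is made up.
from itertools import product

try:
    import ray
except ImportError:  # assumption: evaluate serially when Ray is absent
    ray = None


def toy_score(lr, depth):
    """Made-up objective peaking at lr=0.1, depth=3; stands in for a training run."""
    return -((lr - 0.1) ** 2) - (depth - 3) ** 2


def grid_search(lrs, depths):
    """Return the (lr, depth) pair with the highest toy score."""
    configs = list(product(lrs, depths))
    if ray is None:
        scores = [toy_score(lr, depth) for lr, depth in configs]
    else:
        ray.init(ignore_reinit_error=True)
        try:
            # Each task asks the scheduler for one CPU.
            remote_score = ray.remote(num_cpus=1)(toy_score)
            refs = [remote_score.remote(lr, depth) for lr, depth in configs]
            scores = ray.get(refs)  # results come back in submission order
        finally:
            ray.shutdown()
    best_index = max(range(len(configs)), key=scores.__getitem__)
    return configs[best_index]


if __name__ == "__main__":
    print(grid_search([0.01, 0.1, 1.0], [1, 3, 5]))
```

Because each configuration is evaluated independently, the same pattern applies whether the tasks run on local cores or on a cluster. A real training function would replace `toy_score`.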