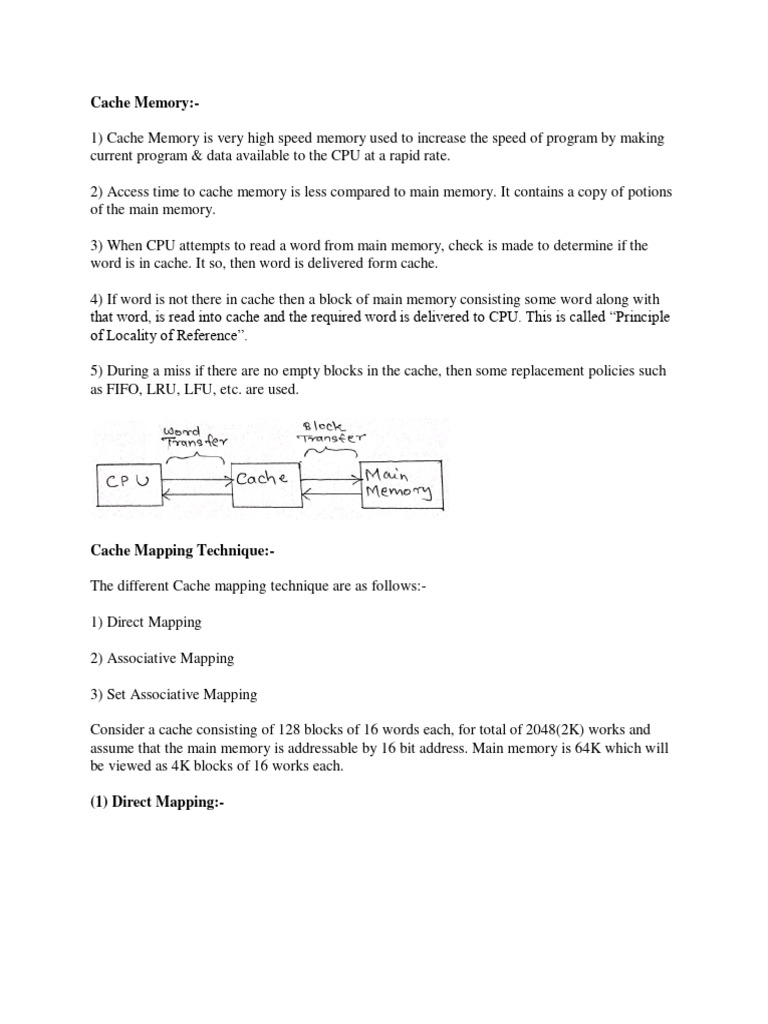

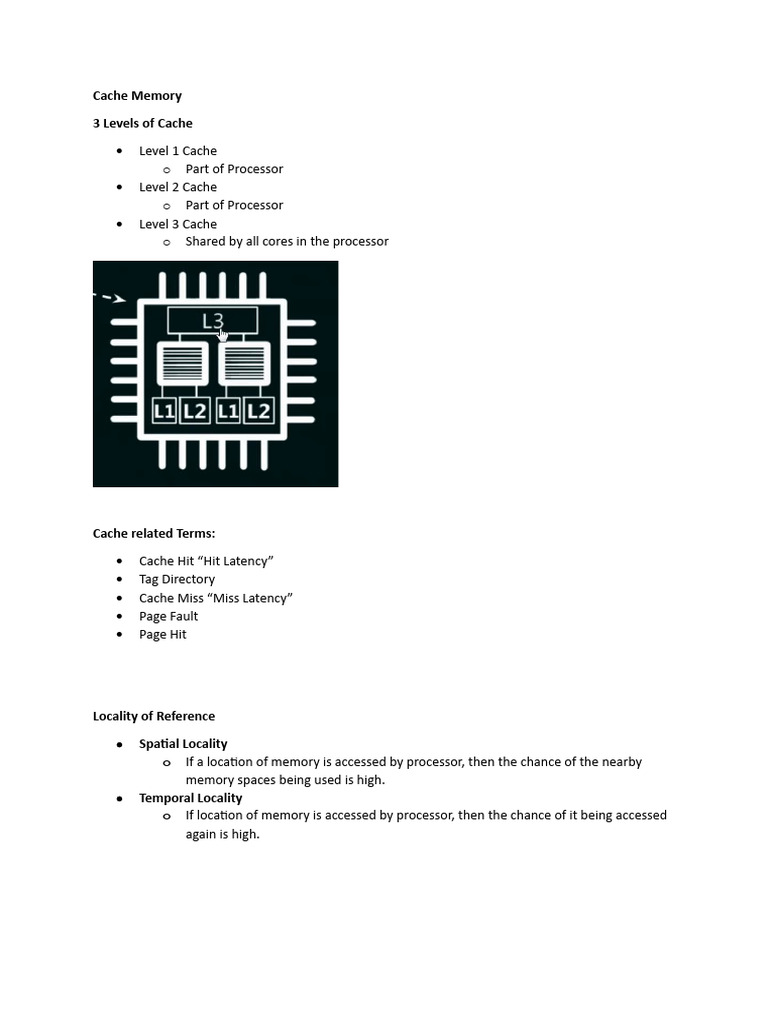

Cache Solutions Pdf Cpu Cache Data. Fall semester 2020-21, CSE2001 (TH), VL2020210104491, reference material I, 29 Oct 2020: cache memory solved problems. This document provides information about a computer architecture and organization course. Answer: an n-way set-associative cache is like having n direct-mapped caches in parallel.
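The "n direct-mapped caches in parallel" picture can be made concrete with a small simulation. The sketch below is illustrative only: the class name `SetAssociativeCache`, the LRU replacement policy, and the block-address trace are assumptions chosen for the example, not taken from the course material. It compares a direct-mapped cache (1 way) with a 2-way cache of the same total capacity.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy n-way set-associative cache that tracks block addresses only."""

    def __init__(self, num_sets, ways):
        self.num_sets = num_sets
        self.ways = ways
        # One OrderedDict per set; insertion order tracks recency (oldest first).
        self.sets = [OrderedDict() for _ in range(num_sets)]

    def access(self, block_addr):
        """Return True on a hit, False on a miss (the block is then filled)."""
        index = block_addr % self.num_sets   # which set the block maps to
        tag = block_addr // self.num_sets    # identifies the block within the set
        lines = self.sets[index]
        if tag in lines:
            lines.move_to_end(tag)           # mark as most recently used
            return True
        if len(lines) >= self.ways:
            lines.popitem(last=False)        # evict the least recently used way
        lines[tag] = True
        return False


def count_hits(cache, trace):
    """Count how many accesses in the trace hit in the cache."""
    return sum(cache.access(block) for block in trace)


# Hypothetical trace: blocks 0 and 4 collide in a 4-entry direct-mapped cache.
trace = [0, 4, 0, 4, 0, 4]

direct_mapped = SetAssociativeCache(num_sets=4, ways=1)  # 4 blocks total
two_way = SetAssociativeCache(num_sets=2, ways=2)        # 4 blocks total

dm_hits = count_hits(direct_mapped, trace)
tw_hits = count_hits(two_way, trace)
print(dm_hits, tw_hits)
```

With one way, blocks 0 and 4 keep evicting each other; with two ways, both fit in the same set, so every access after the first two hits.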

Cache Memory Pdf Cpu Cache Information Technology. CS 0019, 21st February 2024 (lecture notes derived from material from Phil Gibbons, Randy Bryant, and Dave O'Hallaron). Cache memories are small, fast SRAM-based memories managed automatically in hardware; they hold frequently accessed blocks of main memory. Caching whole lines rather than individual bytes has the advantage of lower bookkeeping overhead: a cache line has 8 bytes of address and 64 bytes of data. It also exploits spatial locality: accessing location x causes the 64 bytes around x to be cached. Prefetching can introduce cache pollution; prefetched data is often placed in a separate prefetch buffer to avoid pollution, and this buffer must be looked up in parallel with the cache access. Caches are a mechanism to reduce memory latency based on the empirical observation that the patterns of memory references made by a processor are often highly predictable.
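The cache-line arithmetic above can be checked with a few lines of code. This is a minimal sketch: the 64-byte line and 8-byte address come from the text, while the helper names and the assumption that lines are aligned to multiples of the line size are illustrative.

```python
LINE_SIZE = 64  # bytes of data per cache line (from the text)
TAG_BYTES = 8   # bytes of address stored per line (from the text)


def line_span(addr, line_size=LINE_SIZE):
    """Return (first, last) byte address of the aligned line containing addr."""
    base = addr - (addr % line_size)
    return base, base + line_size - 1


def bookkeeping_overhead(tag_bytes, data_bytes):
    """Address bytes stored per byte of cached data."""
    return tag_bytes / data_bytes


# Accessing x brings in the whole aligned 64-byte line that contains x.
x = 1000
first, last = line_span(x)

# Overhead with 64-byte lines versus tracking every byte individually.
per_line = bookkeeping_overhead(TAG_BYTES, LINE_SIZE)
per_byte = bookkeeping_overhead(TAG_BYTES, 1)
print(first, last, per_line, per_byte)
```

Under these assumptions, an access to address 1000 caches bytes 960 through 1023, and the address overhead drops from 8 bytes per data byte to 0.125 bytes per data byte.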

Cache Memory Pdf Cpu Cache Computer Engineering. You are asked to optimize a cache capable of storing 8 bytes total for the given references. There are three direct-mapped cache designs possible by varying the block size: C1 has one-byte blocks, C2 has two-byte blocks, and C3 has four-byte blocks. Rather than treating the cache as a single monolithic block, divide it into independent banks to support simultaneous accesses; the ARM Cortex-A8 supports one to four banks in its L2 cache. Cache memory is one of the most important features of modern processor design for ensuring high performance. It is usually very fast, taking as little as 1 clock cycle to access. Open questions remain: How should space be allocated to threads in a shared cache? Should we store data in compressed format in some caches? How do we do better reuse prediction and management in caches?
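The comparison of C1, C2, and C3 can be sketched as a small direct-mapped cache simulation. The reference sequence the exercise refers to is not reproduced here, so the byte-address trace below is a hypothetical stand-in, and `count_misses` is an illustrative helper rather than part of the original problem.

```python
def count_misses(trace, block_size, capacity=8):
    """Count misses of a direct-mapped cache holding `capacity` bytes in total."""
    num_blocks = capacity // block_size
    slots = [None] * num_blocks       # block number currently held by each slot
    misses = 0
    for addr in trace:
        block = addr // block_size    # which memory block the byte belongs to
        index = block % num_blocks    # direct-mapped: exactly one candidate slot
        if slots[index] != block:
            slots[index] = block      # fill on miss, evicting the old block
            misses += 1
    return misses


# Hypothetical byte-address trace (NOT the references from the exercise).
trace = [0, 1, 2, 3, 8, 0, 9, 1]

# Block sizes for C1, C2, and C3 as described in the text.
designs = {"C1": 1, "C2": 2, "C3": 4}
results = {name: count_misses(trace, size) for name, size in designs.items()}
print(results)
```

For this particular trace, larger blocks capture more of the sequential accesses, but they also leave fewer slots, so conflicting addresses such as 0 and 8 evict each other in all three designs. A different trace can rank the designs differently, and miss count alone ignores the higher miss penalty of larger blocks.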