An n-way set-associative cache is like having n direct-mapped caches in parallel. The source document outlines cache design parameters such as size, mapping function, replacement algorithm, write policy, and block size. It also provides examples of cache implementations in Intel and IBM processors.
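As a rough illustration of the mapping function and replacement algorithm mentioned above, the following is a minimal sketch of an n-way set-associative cache that tracks tags only. The class name `SetAssociativeCache`, its parameters, and the choice of LRU replacement are assumptions made for this example; they are not taken from the source document.

```python
from collections import OrderedDict


class SetAssociativeCache:
    """Toy n-way set-associative cache (hypothetical; stores tags, not data)."""

    def __init__(self, num_sets, ways, block_size):
        self.num_sets = num_sets
        self.ways = ways
        self.block_size = block_size
        # One OrderedDict per set; key order records recency (oldest first).
        self.sets = [OrderedDict() for _ in range(num_sets)]

    def access(self, address):
        """Return True on a hit, False on a miss (the block is then loaded)."""
        block = address // self.block_size   # drop the byte offset
        index = block % self.num_sets        # which set the block maps to
        tag = block // self.num_sets         # identifies the block within the set
        lines = self.sets[index]
        if tag in lines:
            lines.move_to_end(tag)           # mark as most recently used
            return True
        if len(lines) >= self.ways:
            lines.popitem(last=False)        # evict the least recently used tag
        lines[tag] = True
        return False


# Same capacity (4 lines of 16 bytes), different associativity.
direct_mapped = SetAssociativeCache(num_sets=4, ways=1, block_size=16)
two_way = SetAssociativeCache(num_sets=2, ways=2, block_size=16)

trace = (0, 64, 0, 64)  # two addresses that map to the same set
print([direct_mapped.access(a) for a in trace])
print([two_way.access(a) for a in trace])
```

With `ways=1` the class behaves as a direct-mapped cache, so the two conflicting addresses keep evicting each other. With `ways=2` both blocks fit in the same set and the repeated accesses hit.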

Caches are a mechanism to reduce memory latency, based on the empirical observation that the patterns of memory references made by a processor are often highly predictable.

When virtual addresses are used, the system designer may choose to place the cache between the processor and the MMU or between the MMU and main memory. A logical cache (virtual cache) stores data using virtual addresses. The processor accesses the cache directly, without going through the MMU.

In computer architecture, almost everything is a cache! A branch target buffer, for example, is a cache of branch targets. Most processors today have three levels of caches. One major design constraint for caches is their physical size on the CPU die: because die area is limited, we cannot have too many caches.

Zeyad Ayman and others published "Cache Memory" on ResearchGate on Oct 10, 2020.
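The difference between a logical (virtual) cache and a cache placed after the MMU can be sketched as follows. Everything here is a simplified assumption for illustration: the function names, the 4 KiB `PAGE_SIZE`, the dictionary-based page table and memory, and caching single addresses rather than blocks.

```python
PAGE_SIZE = 4096  # assumed page size for this sketch


def translate(page_table, vaddr):
    """Stand-in for the MMU: map a virtual address to a physical address."""
    vpn, offset = divmod(vaddr, PAGE_SIZE)
    return page_table[vpn] * PAGE_SIZE + offset


def read_logical(cache, page_table, memory, vaddr):
    """Logical cache: keyed by virtual address, MMU consulted only on a miss."""
    if vaddr in cache:
        return cache[vaddr], "hit"
    paddr = translate(page_table, vaddr)
    cache[vaddr] = memory[paddr]
    return cache[vaddr], "miss"


def read_physical(cache, page_table, memory, vaddr):
    """Physical cache: every access is translated first, then looked up."""
    paddr = translate(page_table, vaddr)
    if paddr in cache:
        return cache[paddr], "hit"
    cache[paddr] = memory[paddr]
    return cache[paddr], "miss"


page_table = {0: 5}               # virtual page 0 -> physical frame 5
memory = {5 * PAGE_SIZE + 12: 99}  # one value stored in main memory

logical_cache, physical_cache = {}, {}
print(read_logical(logical_cache, page_table, memory, 12))
print(read_logical(logical_cache, page_table, memory, 12))
print(read_physical(physical_cache, page_table, memory, 12))
```

On a hit, `read_logical` returns without ever calling `translate`, which mirrors the processor accessing the cache directly. `read_physical` always pays for the translation before the lookup.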

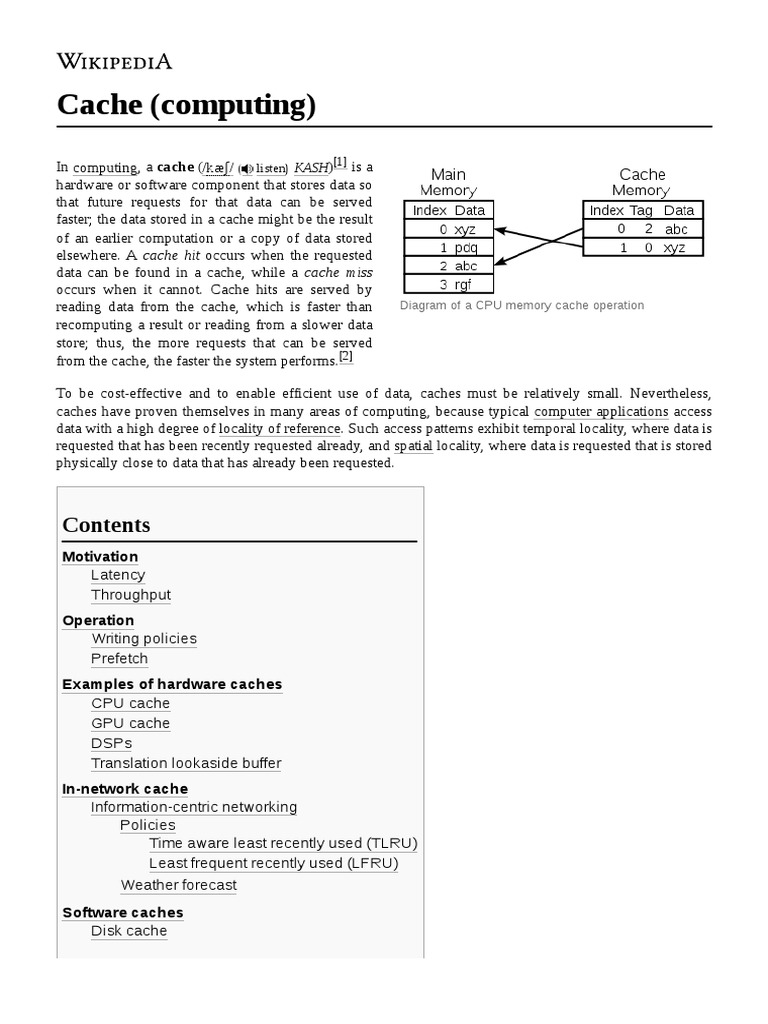

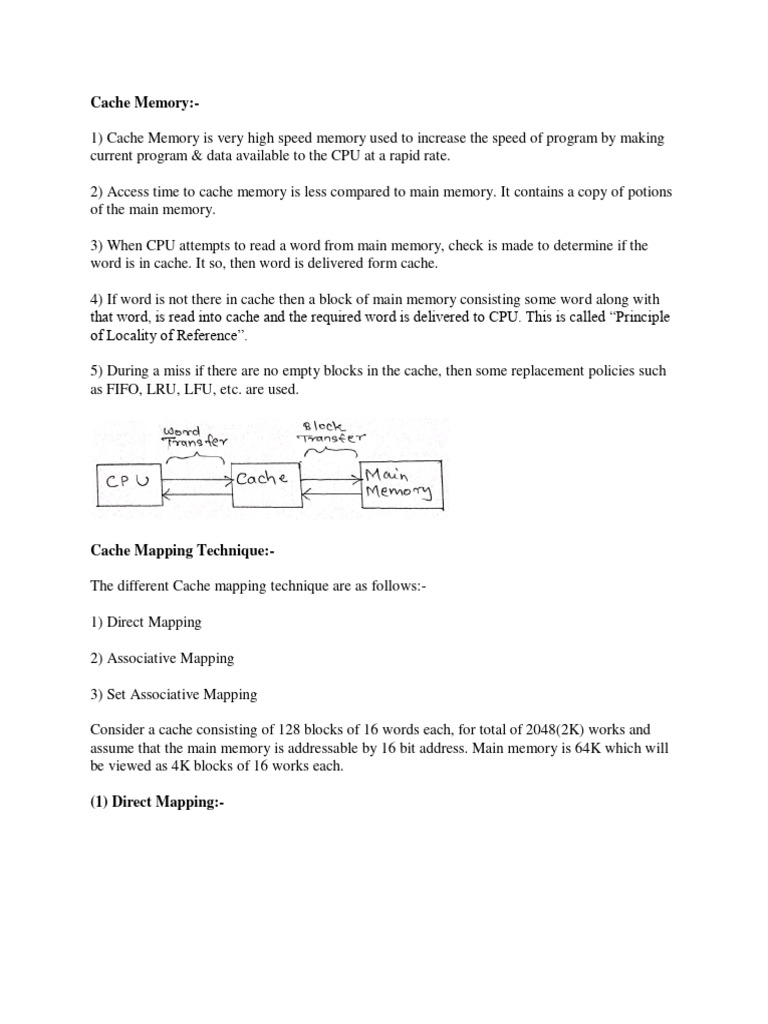

Typically, a computer has a hierarchy of memory subsystems. A CPU cache is used by the CPU of a computer to reduce the average time to access memory. The cache is a smaller, faster, and more expensive memory inside the CPU that stores copies of the data from the most frequently used main memory locations for fast access.

Why do we cache? Caches mask performance bottlenecks by replicating data closer to where it is used. Cache memory is a small amount of fast memory placed between two levels of the memory hierarchy to bridge the gap in access times, either between the processor and main memory (our focus) or between main memory and disk (a disk cache). It is expected to behave like a large amount of fast memory.
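The claim that a cache reduces the average time to access memory is commonly quantified as hit time plus miss rate times miss penalty, applied level by level. The sketch below assumes that textbook model; the function name `average_access_time` and all timing values are hypothetical and chosen only for illustration.

```python
def average_access_time(levels, memory_time):
    """Average access time for a cache hierarchy.

    levels: list of (hit_time, miss_rate) pairs, ordered from the cache
            closest to the CPU outward.
    memory_time: access time of main memory, in the same unit as hit_time.
    """
    time = memory_time
    # Work from the outermost cache inward: a miss at one level costs the
    # average access time of everything behind it.
    for hit_time, miss_rate in reversed(levels):
        time = hit_time + miss_rate * time
    return time


# Hypothetical numbers: times in nanoseconds, miss rates as fractions.
no_cache = average_access_time([], memory_time=100.0)
one_level = average_access_time([(1.0, 0.05)], memory_time=100.0)
two_levels = average_access_time([(1.0, 0.10), (10.0, 0.20)], memory_time=100.0)
print(no_cache, one_level, two_levels)
```

Under these assumed numbers, a single small cache with a 5% miss rate brings the average access time from 100 ns down to roughly 6 ns, which is the sense in which a small fast memory can behave like a large amount of fast memory.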